In a non-linear, accelerated, volatile and interconnected cybersphere, enterprise-wide AI adoption is safer and faster with cybersecurity guardrails.

In brief

- CISOs can manage and invest with confidence when they understand the interwoven characteristics that define today’s complex cybersecurity landscape.

- Cybersecurity functions should develop clearly defined yet adaptable guardrails to help the business deploy and accelerate adoption of AI with confidence.

Half of all organisations reported they have been negatively impacted by cybersecurity vulnerabilities introduced by Artificial Intelligence (AI) systems, the October 2025 EY Global Responsible AI Pulse survey found. The cost is high: average losses top US$4.4m for organisations that experienced AI-related incidents.

Vulnerabilities in AI systems

50%

of organisations have been negatively impacted by cyber vulnerabilities in AI systems.

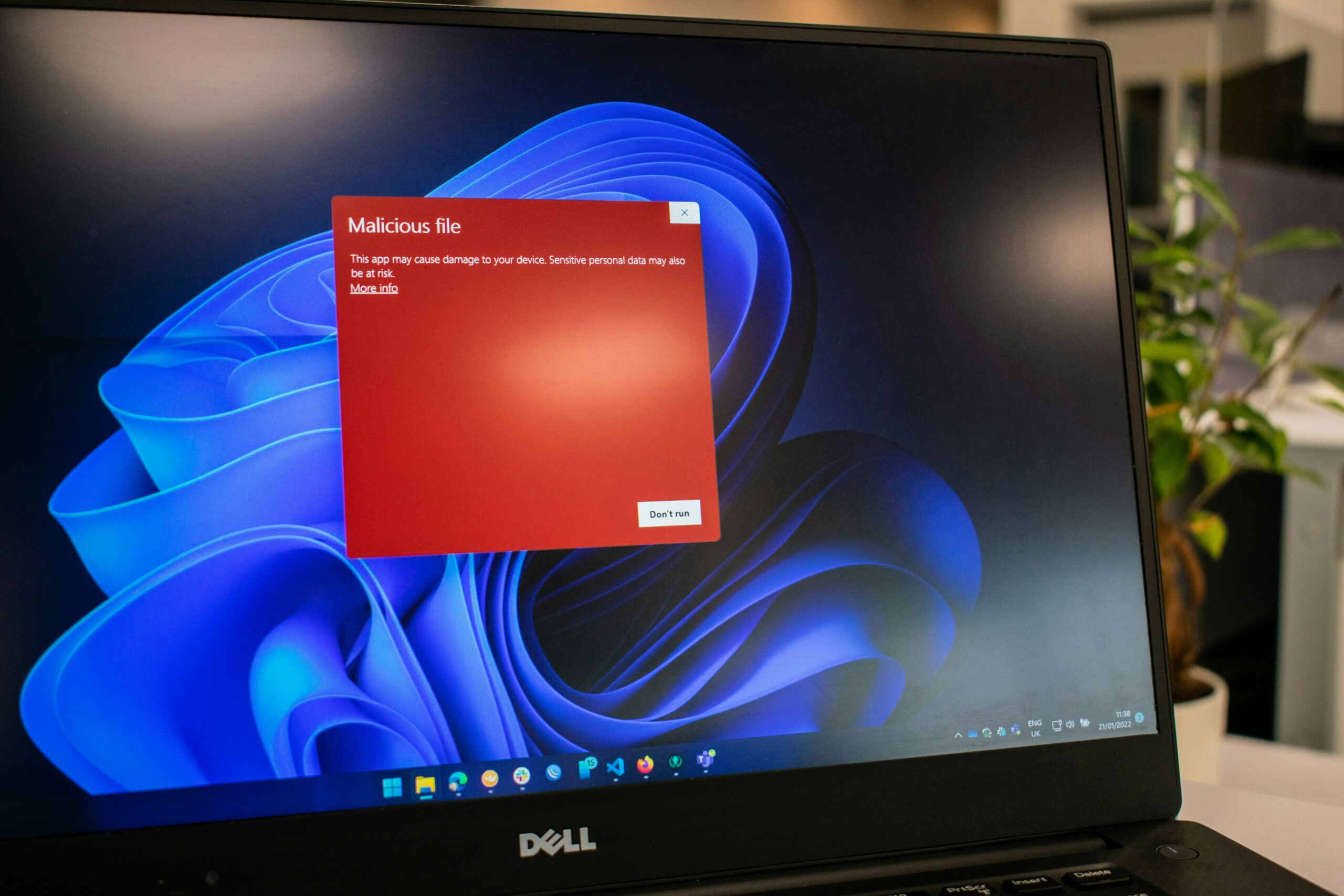

Cyber adversaries are compromising organisations by targeting AI systems across new vectors, using new methods, including data poisoning, prompt injection and model theft. AI is also part of the cyber adversary’s toolkit, increasing their speed and reach, and potentially giving them unanticipated attack methods in the near future.

In this increasingly complex cyberspace, how can CISOs help secure the rollout of AI and, in the process, increase the value the cybersecurity function contributes to the enterprise?

Drawing on EY research, interviews and secondary data, this article first frames the cybersecurity landscape within an AI-accelerated, interconnected environment, and then defines a set of cybersecurity “guardrails” CISOs can use to help confidently facilitate AI adoption across the enterprise.

Chapter 1: A New Cybersecurity Threat Landscape, Defined by NAVI

The cybersecurity landscape is becoming more non-linear, accelerated, volatile and interconnected.

Organisations are still investing heavily to bolster their cybersecurity functions. Among companies with more than US$1b in revenue, 72% spend US$10m or more on cybersecurity, with over a quarter spending US$100m or more, according to EY research. Gartner estimates total cybersecurity spend will increase by 10% in 2025 [1]. But cybersecurity functions are not just spending away their problems; they are being innovative and strategic with their investments, developing AI-powered threat detection and response capabilities and better integrating security into new enterprise-wide initiatives.

This is all an attempt to stay ahead of the threat.

Cybercriminals are also well-resourced but are not bound by corporate governance rules, allowing them to experiment and innovate rapidly and relentlessly probe for weaknesses in their opponents’ defenses.

But trying to keep score in the cybersecurity arms race is a futile exercise. How do you account for a company’s perfect cybersecurity track record when it experiences a severe data breach tomorrow?

Instead, CISOs can benefit by better understanding the interwoven characteristics that define today’s complex cybersecurity landscape.

In the NAVI world, change is increasingly:

- Non-linear, triggering sudden tipping points that can catch companies by surprise

- Accelerated, demanding increased speed of response

- Volatile, with frequent changes in direction that test companies’ agility

- Interconnected, setting off cascades of downstream impacts

By understanding the new NAVI operating environment, CISOs will be able to identify the root causes and structural trends driving change, and make better-informed decisions.

1. Non-linear

In the same way “vibe coding” with AI has made code writing possible for non-coders, “vibe hacking” has the potential to bring cybercrime to the masses. The latest AI advances represent a tipping point for cybercrime, increasing both the number of viable threat actors and the number of victims that can be simultaneously targeted.

In August 2025, Anthropic revealed that a cybercriminal used its AI coding assistant, Claude Code, to carry out a data extortion operation against 17 organisations across multiple countries, including a defense contractor, health care providers and a financial institution. At each step of the attack, the cybercriminal used Claude Code to consult and operate, supporting reconnaissance, exploitation, lateral movement and data exfiltration.

“AI lowers the bar required for cybercriminals to carry out sophisticated attacks,” said Rick Hemsley, EY UK&I Cybersecurity Leader. “Cyberattacking skills that used to take time and experience to develop are now more easily accessible, for free, for a greater number of cybercriminals than ever before.”

The increasing number of viable actors presents a challenge not just for organisations but also for their regulators. Whereas before, regulators and government agencies could focus some efforts on known groups of hackers or advanced persistent threats, AI might further decentralise the threat landscape by quickly arming new groups across new geographies or by providing “lone wolf” actors with the skills previously held by multiple actors working as a group.

Cybercriminals are also using AI to target more victims at once. Social engineering techniques like phishing, voice phishing (vishing) and deepfake vishing are most effective when they are most convincing. In the past, it took time to create a convincing lure for a victim. Now, cybercriminals can use generative AI tools to launch personalised phishing and vishing campaigns against many victims at once. CrowdStrike detected a 442% increase in vishing intrusions in the second half of 2024, a trend expected to continue through 2025 [2].

Vishing intrusions

442%

increase in vishing intrusions in the second half of 2024

Future quantum computing breakthroughs might represent tipping points that trigger non-linear change for cybersecurity. A sufficiently powerful quantum computer could break widely used public-key encryption algorithms far faster than is possible today, rendering current data protection methods obsolete.

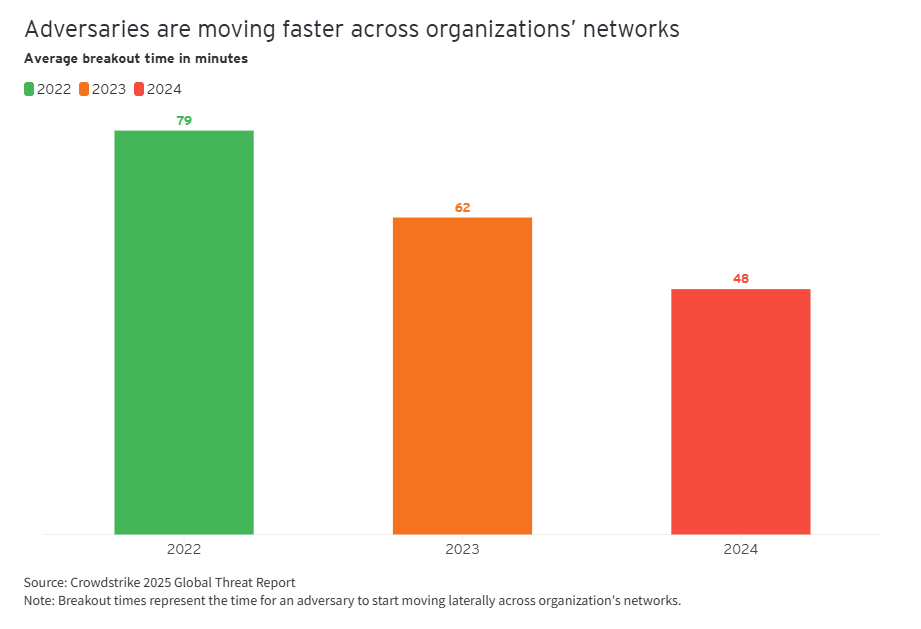

2. Accelerated

The average e-crime breakout time — the time needed for an attacker to start moving laterally across a victim’s network — was 48 minutes in 2024, down from 62 minutes in 2023 and 79 minutes in 2022, according to CrowdStrike.
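To put that acceleration in perspective, the year-over-year change can be worked out directly from the three figures quoted above. The short Python sketch below is illustrative only: the function name `yearly_decline` is our own, and the percentages are simple arithmetic on the quoted averages rather than figures taken from the CrowdStrike report.

```python
# Average e-crime breakout times in minutes, as quoted in the text above.
breakout_minutes = {2022: 79, 2023: 62, 2024: 48}


def yearly_decline(series):
    """Return {year: percent decrease versus the previous year}."""
    years = sorted(series)
    return {
        year: round(100 * (series[prev] - series[year]) / series[prev], 1)
        for prev, year in zip(years, years[1:])
    }


declines = yearly_decline(breakout_minutes)
print(declines)  # {2023: 21.5, 2024: 22.6}
```

On these figures, attackers shaved a little over a fifth off their breakout time in each of the last two years, which is the window defenders have to detect and contain an intrusion.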

Accelerating breakout times are dangerous. When attackers become established in a network, they can gain deeper control and are harder to extract. In a September 2025 cyberattack that canceled and delayed flights for days across Europe, a compromised software provider rebuilt its systems and relaunched them, only to realise the hackers were still inside the system.

Beyond breakout times, other aspects of the cybersecurity landscape are accelerating. The software-as-a-service (SaaS) market has boomed in recent years. Worldwide revenue for enterprise applications will reach US$385.2b in 2026, as estimated by IDC — a nearly 40% increase from 2022, with most of this growth attributed to investments in public cloud software. Building applications in the cloud has helped SaaS providers’ customers boost innovation and efficiency, expand rapidly and better serve customers. But accelerated product and feature rollouts to keep pace with fierce competition can come at the expense of security. Cyberattacks on smaller, fast-moving SaaS providers often impact their customers, due to data sharing and tight technology integration.

Similarly, organisations are accelerating internal AI initiatives. While doing so, leaders recognise that speed comes with risk: only 14% of CEOs believe AI data protection is strongly safeguarded in their organisations, according to a recent EY Responsible AI Pulse survey.

Only

14%

of CEOs believe AI data protection is strongly safeguarded in their organisations.

“As businesses accelerate AI and technology adoption, they should consider cybersecurity implications from the outset,” Ayan Roy, EY Americas Cybersecurity Leader, said. “Done right, cybersecurity should not slow adoption but should encourage safer, faster innovation across the business.”

3. Volatile

Increased geopolitical and regulatory volatility is impacting cybersecurity. Almost 60% of organisations said geopolitical tensions affected their cybersecurity strategy in 2025, according to the World Economic Forum [3]. That isn’t surprising: recent years of increased geopolitical volatility have had many knock-on effects in the cybersphere for both businesses and governments.

Geopolitical volatility

59%

of organisations said geopolitical tensions affected their cybersecurity strategy

Leaders don’t expect this volatility to abate in the near future. More than half (57%) expect geopolitical and economic uncertainty to last longer than a year, with nearly a quarter (24%) forecasting longer than three years, according to the September 2025 EY-Parthenon CEO Outlook Survey.

Critical infrastructure — for utilities, transportation, communications and energy — can be impacted by geopolitical volatility when targeted by state-sponsored cyberattacks. These attacks ramp up tensions but don’t usually lead to conventional warfare, making them a popular method to prod a foe without declaring war. For businesses, critical infrastructure outages can lead to factory downtime, supply chain and transportation disruptions, physical asset damage and more.

These same pieces of public infrastructure can also be second-order victims of cyberattacks when a third-party supplier is targeted. Cybercriminals might be incentivised to target businesses that support high-profile pieces of infrastructure — like airports or train systems — to build public pressure for a quick fix that may come from a ransom payment.

Regulatory volatility also impacts cybersecurity for organisations. “Politics are realigning and growing more polarised, increasing the likelihood of significant swings in policy from one election to the next,” said Catherine Friday, EY Global Government & Infrastructure Industry Leader.

When it comes to regulation, cyberspace is not borderless, so the picture is especially complex for multinational companies. This is currently in focus with AI regulation, which is at different stages in different parts of the world, resulting in an ever-changing patchwork of policies to comply with.

“Multinational companies face complex cybersecurity, AI, data and other technology regulations from multiple jurisdictions,” Piotr Ciepiela, EY Global Government and Infrastructure Cyber Leader, said. “The smartest companies design compliance into their technology, so they can respond to regulatory volatility with adjustments, not overhauls.”

4. Interconnected

Organisations thrive when they form strong partnerships with suppliers. Cybercrime thrives on large attack surfaces, like those formed by an interconnected ecosystem of third parties with varying levels of cybersecurity maturity.

As organisations build internal AI functions, most rely on third parties for large language models (LLMs), since building LLMs from scratch is expensive and requires massive compute resources.

This hybrid approach to AI development — rapid development of internal tools using external resources — is no different from how other internal technologies are developed. But the tradeoff is increased cybersecurity risk. According to the 2025 EY Global Third-Party Risk Management Survey, TPRM programs scan for cybersecurity risk more often than any other risk.

Organisational complexity is also increasing. “In a world where organisations are becoming more complex and interconnected, within a cyber landscape that is ever-changing, the stakes for CISOs are raised. They not only need to ensure that enterprise-wide AI initiatives are secure, but they also need to secure their ecosystem in collaboration with third parties,” Rudrani Djwalapersad, EY Global Cyber Risk and Cyber Resilience Lead, said.

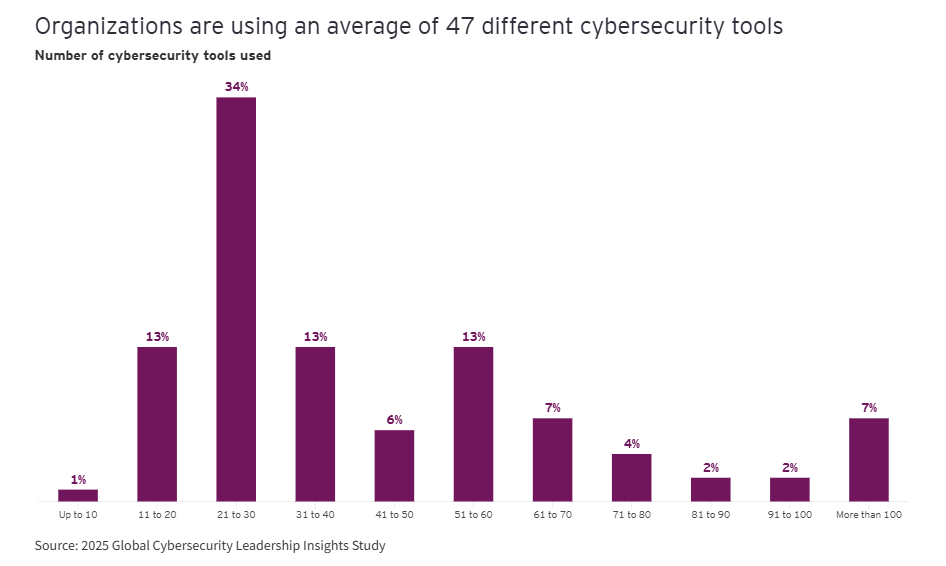

Just within the cybersecurity function, organisations use an average of 47 tools, according to EY research. On an even more granular level, employees recognise risks in their AI experimentation: EY research (via ey.com US) found that 39% of them are not confident in using AI responsibly.

Chapter 2: Cybersecurity Guardrails to Improve Enterprise-wide AI Adoption

A NAVI world poses vexing cybersecurity challenges for AI adoption. Done right, the cybersecurity function can increase both speed and security of adoption.

Nearly every business function makes a case to be involved “from the outset” of AI initiatives, each for good reason. For the cybersecurity function, “shifting left” — performing security testing earlier in the software development lifecycle — is both a compelling mantra and an effective policy to safeguard new technology. But for technologists who want to “move fast and break things” and for business leaders who want to beat the competition to market, it can seem unwieldy.

Simply shifting left also isn’t an effective strategy for minimising cybersecurity risks in the NAVI world. Shorter technology development cycles and highly adaptable cybercriminals demand a more resilient approach to cybersecurity.

It is more effective to focus on a set of clear cybersecurity “guardrails” that help increase both speed and security of AI adoption. Guardrails are a clearer, more adaptable way to embed security into AI initiatives — one that integrates into existing systems, accelerates adoption and gives stakeholders confidence that key risks are being managed from day one. Guardrails are also more compatible with responsible AI initiatives. Both programs aim to build trust and manage risk, and their integration strengthens each while amplifying visibility and support across the enterprise.

Cybersecurity guardrails help leading CISOs better integrate into key strategic decisions, earlier. And early integration leads to larger value creation from the cybersecurity function, as our 2025 EY Global Cybersecurity Leadership Insights Study found.

Here are five guardrails that leading CISOs are using to create value in their organisations and mitigate cybersecurity risks in the NAVI world:

1. Safeguard the human risk factor

Leading CISOs are minimising human risk factors by protecting the human-AI interface and reducing opportunities for employees to be exploited as the weakest link. “Technology alone can’t secure an organisation. Companies that invest in reducing human risk through awareness, culture and accountability will be far more resilient against modern cyber threats than those that rely solely on tools,” said Bill Fryberger, EY Americas Cybersecurity Advisory Leader.

Organisations are implementing stronger identity and access controls, modernising insider threat programs and rolling out tailored, risk-based awareness training to their employees to curb human error, counter social engineering campaigns and avoid misconfigurations that could lead to breaches.

Risks mitigated:

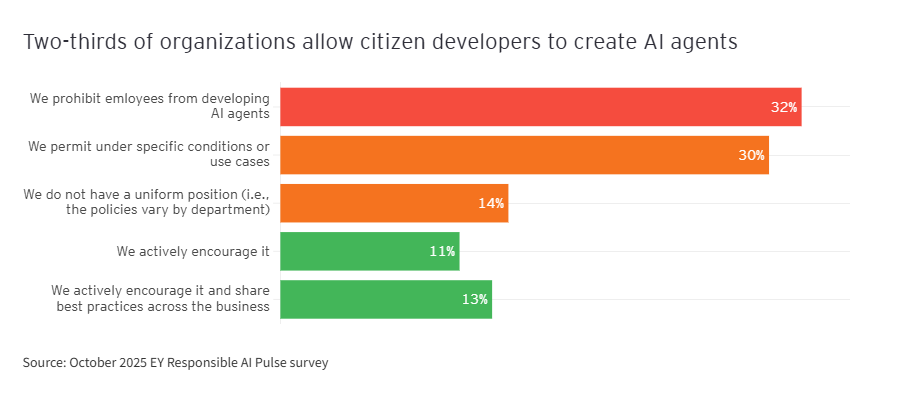

- Human error: Exploitation of human error may increase as employees independently “toy around” with AI tools in their day-to-day tasks. According to the October 2025 EY Responsible AI Pulse survey, 68% of organisations allow “citizen developers” (employees independently developing or deploying AI agents). However, only six in 10 provide formal guidance to their employees.

- Targeted AI vishing, phishing and social engineering campaigns: Already an effective method to gain unauthorised access in a cyberattack, these campaigns are on the rise: AI-generated phishing emails rose by 67% in 2025 [4].

- Inadvertent misconfigurations that cause data breaches.

2. Secure data used in AI initiatives

Data is the foundation of any AI system, so leading CISOs are working to secure every type, whether it is user input data to help tailor results for organisational contexts, training and fine-tuning data to help build foundational models, or labeled data for validating model outputs. They focus on safeguarding the confidentiality, integrity and availability of data — deploying defenses against data poisoning and injection attacks, tightening controls on third-party and sensitive data, and applying strong encryption to reduce exposure of confidential information and ensure AI systems are trained on trusted sources.

Risks mitigated:

- Data poisoning: Data poisoning can reduce model accuracy by up to 27% in image recognition and 22% in fraud detection, making it a high priority for CISOs to address [5].

- Use of confidential, sensitive or personally identifiable information (PII) to train AI systems and agents: See the case study below to learn how Microsoft 365 Copilot maintains strict data standards.

- Data leaks from AI outputs: AI can reveal sensitive information by accident or on purpose. For instance, in a recent prompt injection challenge, 88% of participants were able to trick GenAI into giving away sensitive information [6].

Case study: How Microsoft 365 Copilot maintains data confidentiality, integrity, and availability

Microsoft estimates around 70% of Fortune 500 companies use Microsoft 365 Copilot [7]. Copilot, in turn, uses data from its users to enhance their creativity, productivity and skills. It can draw on user data from Microsoft Outlook, Microsoft PowerPoint and other Microsoft 365 apps to make previously complex, time-consuming tasks easier. While Copilot, like all similar AI products, depends on data, its users depend on Microsoft to maintain data confidentiality, integrity and availability.

Confidentiality

Microsoft enforces strict confidentiality across its AI ecosystem through layered enterprise controls. User prompts and responses in Copilot are encrypted both at rest and in transit, inherit user-specific identity and permissions, and are processed within service boundaries that offer enterprise data protection. Sensitive data is shielded through Microsoft Purview Data Loss Prevention, which prevents protected files from being ingested or surfaced by AI systems without appropriate rights. Additionally, customer data — including prompts and outputs — is not used to train foundation models unless a customer explicitly opts in.

Integrity

To preserve data integrity, Microsoft embeds auditing, classification and lifecycle management across all AI interactions. Every Copilot prompt and response is captured in the unified audit log, tied to retention and deletion policies, and available for eDiscovery. Files referenced during AI interactions carry forward their sensitivity labels and information protection settings, preventing unauthorised use or modification. By combining classification with encryption and customer-controlled policies, Microsoft ensures that AI systems process data consistently with organisational governance standards, and that administrators can trace and validate the provenance of AI outputs.

Availability

Microsoft maintains high availability of AI data and services through the resilience of its cloud infrastructure and user-specific storage architecture. Copilot activities are stored within the user’s Office 365 environment, ensuring that data remains accessible under the same reliability guarantees as the broader Microsoft Cloud. In practice, this means users retain seamless access to Copilot functionality, while being notified in real time if the service is unavailable, aligning AI reliability with enterprise expectations for mission-critical productivity platforms.

3. Re-engineer AI threat detection and response

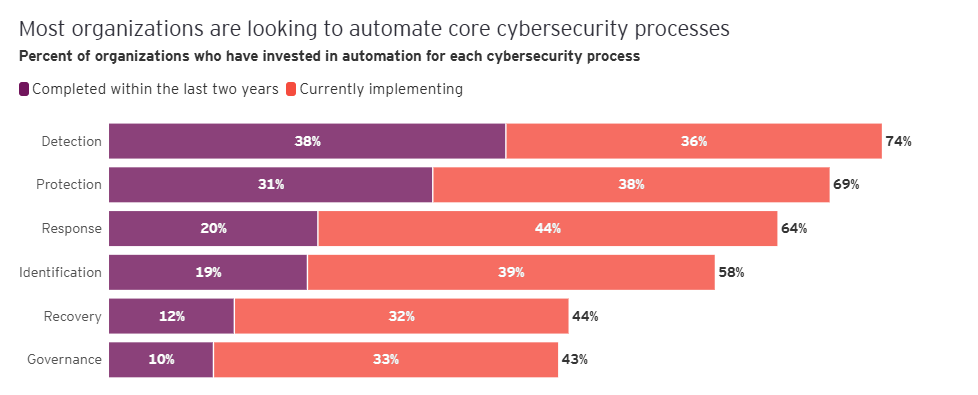

Leading CISOs are re-engineering AI threat detection and response by unifying visibility and defense across the entire AI attack surface. Three-quarters of organisations are currently working to automate their cybersecurity detection processes, according to EY research. They are applying AI-driven monitoring, automated response and enhanced threat intelligence to block prompt injections, sanitise outputs, redact sensitive data, mitigate denial-of-service attempts and contain overprivileged agents. This integrated approach helps organisations quickly detect, respond and adapt to malicious activity targeting AI systems and their supply chains. The banking sector is especially advanced with agentic AI, with more than half of executives saying agentic AI systems are highly capable of improving cybersecurity, according to an MIT Technology Review study in association with EY.
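As a rough illustration of what sanitising outputs and redacting sensitive data can look like in practice, the Python sketch below masks e-mail addresses and card-like numbers in a model response before it is returned to the user. It is a minimal sketch, not a description of any vendor's product: the `PATTERNS` table and the `redact` function are hypothetical names of our own, and the two regular expressions are deliberately simple stand-ins for a properly vetted data loss prevention control.

```python
import re

# Hypothetical patterns for illustration only. A production control would
# rely on a vetted data loss prevention service, not two regexes.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def redact(text):
    """Replace anything matching a sensitive pattern with a labelled mask."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[REDACTED {label}]", text)
    return text


response = "Contact jane.doe@example.com, card 4111 1111 1111 1111."
print(redact(response))
```

Placing a filter like this on the output path means a successful prompt injection still has to get past a second, independent check before sensitive data leaves the system.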

Risks mitigated:

- Cyberattacks on AI systems and AI supply chains: CISOs are increasingly aware of higher cybersecurity risk with AI systems. For instance, 76% of organisations that use AI in their audits perceive higher cyber risk [8].

- Excessive agency or inappropriate output from AI agents

CoreWeave, a specialised GPU cloud provider, delivers accelerated computing for demanding workloads such as VFX, pixel streaming and generative AI. To support these operations, the company required a security platform capable of scaling seamlessly while avoiding any performance slowdowns that could undermine its reputation for speed and efficiency. After a successful proof of concept, CoreWeave adopted the CrowdStrike Falcon platform, deploying multiple Falcon modules [9].

Deployment occurred within two weeks, with Falcon sensors installed across all CoreWeave worker nodes. This gave the company broad visibility into endpoints, cloud nodes, applications and services, while ensuring continuous protection without slowing down workloads.

CoreWeave’s incident response process then began to follow three steps: detect, investigate and triage. Alerts are generated by Falcon OverWatch, CrowdStrike’s 24/7 managed threat hunting service, as well as by internal security teams monitoring Falcon dashboards. Once an alert is raised, analysts use detailed telemetry such as hostnames, container IDs and host IDs to trace and isolate issues within the infrastructure. This enables CoreWeave not only to remediate threats quickly but also to collaborate with customers whose workloads might be affected, containing risks before they escalate.

By running a single, lightweight agent across its environment, CoreWeave reduces complexity and streamlines provisioning, while CrowdStrike’s automation often neutralises threats instantly. In situations requiring human response, the platform provides rich context and intelligence, enabling decisive action.

CrowdStrike helps CoreWeave meet escalating AI-era demand while maintaining a high standard of security. CrowdStrike provides the scalability, visibility and threat intelligence needed to protect both CoreWeave’s own infrastructure and its customers’ workloads. The result is a cloud environment that remains fast, resilient and secure, empowering CoreWeave to fuel the ongoing AI boom.

4. Mitigate AI supply chain threats

The interconnected nature of AI development requires organisations to build strong third-party risk management and attack surface visibility. CISOs are mitigating AI supply chain threats by implementing transparency, visibility and minimum security standards across third-party providers and AI components. They are strengthening asset management, applying rigorous third-party risk controls and using cryptographic verification of models to reduce hidden dependencies and vulnerabilities introduced through external AI software.
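One simple form of cryptographic verification is to pin the SHA-256 digest of a model file when it is approved and to refuse to load any file whose digest no longer matches. The Python sketch below shows the idea using only the standard library. The function names `sha256_of` and `verify_model`, the file name `model.bin` and the demo bytes are our own assumptions for illustration. A digest check like this only detects tampering relative to a trusted pinned value; it is not a substitute for signed releases from the model provider.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path, expected_hex):
    """Return True only if the file's digest matches the pinned value."""
    return hmac.compare_digest(sha256_of(path), expected_hex.lower())


# Demo with a throwaway file standing in for downloaded model weights.
with tempfile.TemporaryDirectory() as tmp:
    model = Path(tmp) / "model.bin"
    model.write_bytes(b"demo weights")
    pinned = hashlib.sha256(b"demo weights").hexdigest()
    print(verify_model(model, pinned))   # True: file matches the pinned digest
    model.write_bytes(b"tampered weights")
    print(verify_model(model, pinned))   # False: contents changed after pinning
```

The pinned digest should be stored and distributed separately from the model file itself, otherwise an attacker who can replace one can replace both.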

Risk mitigated:

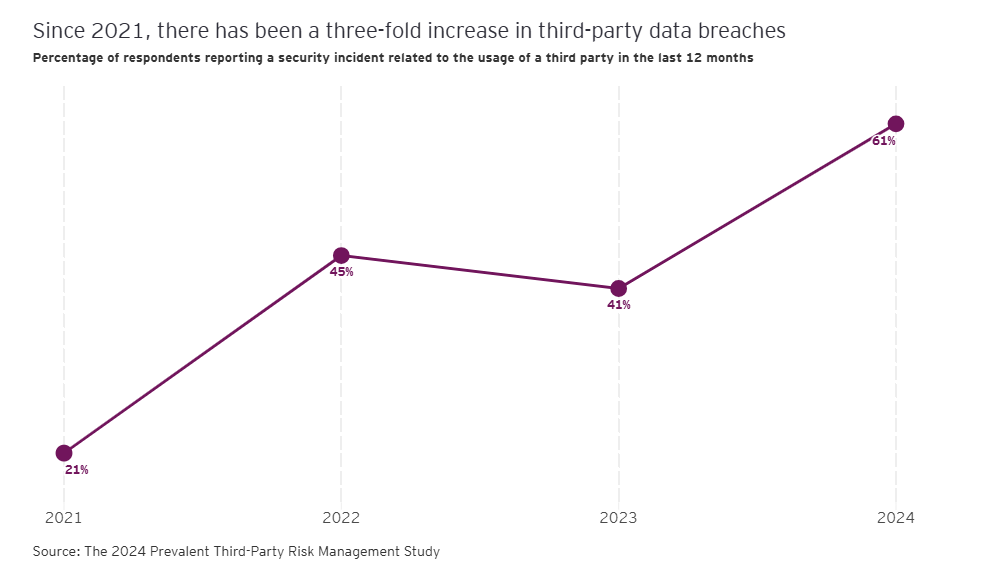

- Complexity, hidden dependencies and additional vulnerabilities: The threat is real: 61% of companies experienced a third-party breach in the past year [10].

JPMorgan Chase’s CISO wrote an open letter to its third-party suppliers in April 2025 warning that the current SaaS model is creating vulnerabilities that are “weakening the global economic system.”

The current SaaS ecosystem’s critical vulnerability — as highlighted by the letter — is that organisations have “little choice but to rely heavily on a small set of leading service providers,” which concentrates cybersecurity risks in a way that the previous, more diverse, distributed ecosystem did not.

Additionally, organisations today integrate with third parties through identity protocols that require direct interactions between the organisation’s sensitive internal resources and the third party’s services. In the past, these interaction layers were separated from the organisation’s core systems and sensitive data, creating a boundary that protected the organisation.

The problem is getting worse, not better, according to the letter. It lists several emerging vulnerabilities:

- Inadequately secured authentication tokens vulnerable to theft and reuse

- Software providers gaining privileged access to customer systems without explicit consent or transparency

- Opaque fourth-party vendor dependencies silently expanding this same risk upstream

These challenges require decisive, collective and immediate action, the letter states. Third parties must treat security as equal to innovation and must deliver secure-by-default SaaS with continuous proof of controls, transparent risk management and options like confidential computing, self-hosting or bring-your-own-cloud to make integration more secure.

The letter’s three calls to action were:

- Software providers must prioritise security over rushing features. Comprehensive security should be built in or enabled by default.

- We must modernise security architecture to optimise SaaS integration and minimise risk.

- Security practitioners must work collaboratively to prevent the abuse of interconnected systems.

For JPMorgan Chase, the largest bank in the US, doing business with third parties is a critical element of its sustained success. But, for the sake of the global economic system and all the banks and third-party services providers within it, doing business should be more secure than it currently is.

5. Harden AI systems

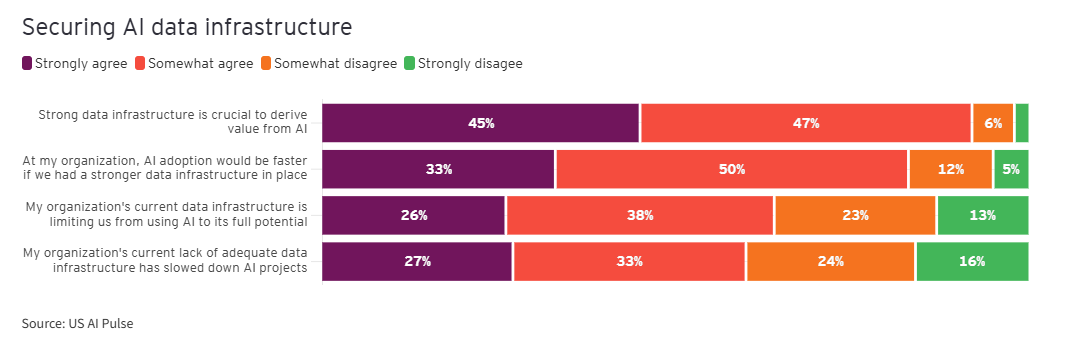

Organisations are working with their CISOs to harden AI systems by embedding security throughout the development and deployment lifecycle — from design to operation. They recognise the criticality of this step: 83% of leaders say AI adoption would be faster if they had stronger data infrastructure in place, according to EY research (via ey.com US). “Integrating the right security controls into an AI deployment and hardening AI systems helps the cybersecurity team set the tone for the entire organisation, establishing the team as a role model for implementing responsible AI,” said Dan Mellen, EY Global Cyber Chief Technology Officer.

As a result, organisations are integrating secure coding and model governance into machine learning operations (MLOps), applying adversarial testing and red teaming, and enforcing strong configuration, segmentation and vulnerability management standards. This approach reduces errors and misconfigurations, protects against infrastructure-level weaknesses, and helps ensure AI models and agents are deployed on resilient foundations.

Risks mitigated:

- Errors, misconfigurations and vulnerable code from fast-paced development and lack of expertise: Cloud and AI misconfigurations are exceptionally common, with 98.6% of organisations stating they have critical cloud misconfigurations.

- Underlying infrastructure vulnerabilities: Weak infrastructure is both a risk and a hindrance to the AI rollout. According to EY research (via ey.com US), 67% of leaders say that inadequate infrastructure is actively holding back their AI efforts.

Taken together, this set of cybersecurity guardrails will help CISOs both secure the AI rollout across the enterprise and generate more value from the cybersecurity function. By focusing guardrail investments on clear value-driving areas, CISOs can promote their function within the organisation and rapidly enhance their cybersecurity capabilities to keep pace with the NAVI world.

Summary

While organisations develop internal AI programs and partner with AI providers, the cybersphere is becoming increasingly non-linear, accelerated, volatile and interconnected. CISOs should develop cybersecurity guardrails to help secure AI adoption across the enterprise.

AnnMarie Pino, Associate Director, Ernst & Young LLP; William Reid, Assistant Director, Ernst & Young LLP; and Joe Morecroft, Associate Director, EYGS LLP, contributed to this article.

1. Gartner Forecasts Worldwide End-User Spending on Information Security to Total $213 Billion in 2025, Gartner, 29 July 2025, https://www.gartner.com/en/newsroom/press-releases/2025-07-29-gartner-forecasts-worldwide-end-user-spending-on-information-security-to-total-213-billion-us-dollars-in-2025

2. CrowdStrike 2025 Global Threat Report, CrowdStrike, https://www.crowdstrike.com/en-us/global-threat-report/

3. Global Cybersecurity Outlook 2025, World Economic Forum, https://www.weforum.org/publications/global-cybersecurity-outlook-2025/

4. AI Cyber Attacks Statistics 2025: Attacks, Deepfakes, Ransomware, SQ Magazine, 7 October 2025, https://sqmagazine.co.uk/ai-cyber-attacks-statistics/

5. IBM, https://www.ibm.com/think/topics/data-poisoning

6. Dark Side of GenAI Report, Immersive Labs, 21 May 2024, https://www.immersivelabs.com/resources/press/immersive-labs-unveils-new-dark-side-of-genai-report-about-how-people-trick-chatbots-into-exposing-company-secrets

7. Microsoft customers share impact of generative AI, Microsoft, 19 November 2024, https://news.microsoft.com/source/2024/11/19/microsoft-customers-share-impact-of-generative-ai/

8. Protiviti-IIA Survey, 8 October 2024, https://www.protiviti.com/us-en/press-release-ai-systems-elevate-cybersecurity-and-data-risks

9. How CoreWeave Uses CrowdStrike to Secure Its High-Performance Cloud, CrowdStrike, 13 November 2023, https://www.crowdstrike.com/en-us/blog/how-coreweave-secures-cloud-with-crowdstrike/

10. 2024 Third-Party Risk Management Study, Prevalent, https://info.mitratech.com/hubfs/Other/M-and-A/Prevalent/documents/2024-Third-Party-Risk-Management-Study.pdf

The article was first published by EY.

Photo by Adi Goldstein on Unsplash.
