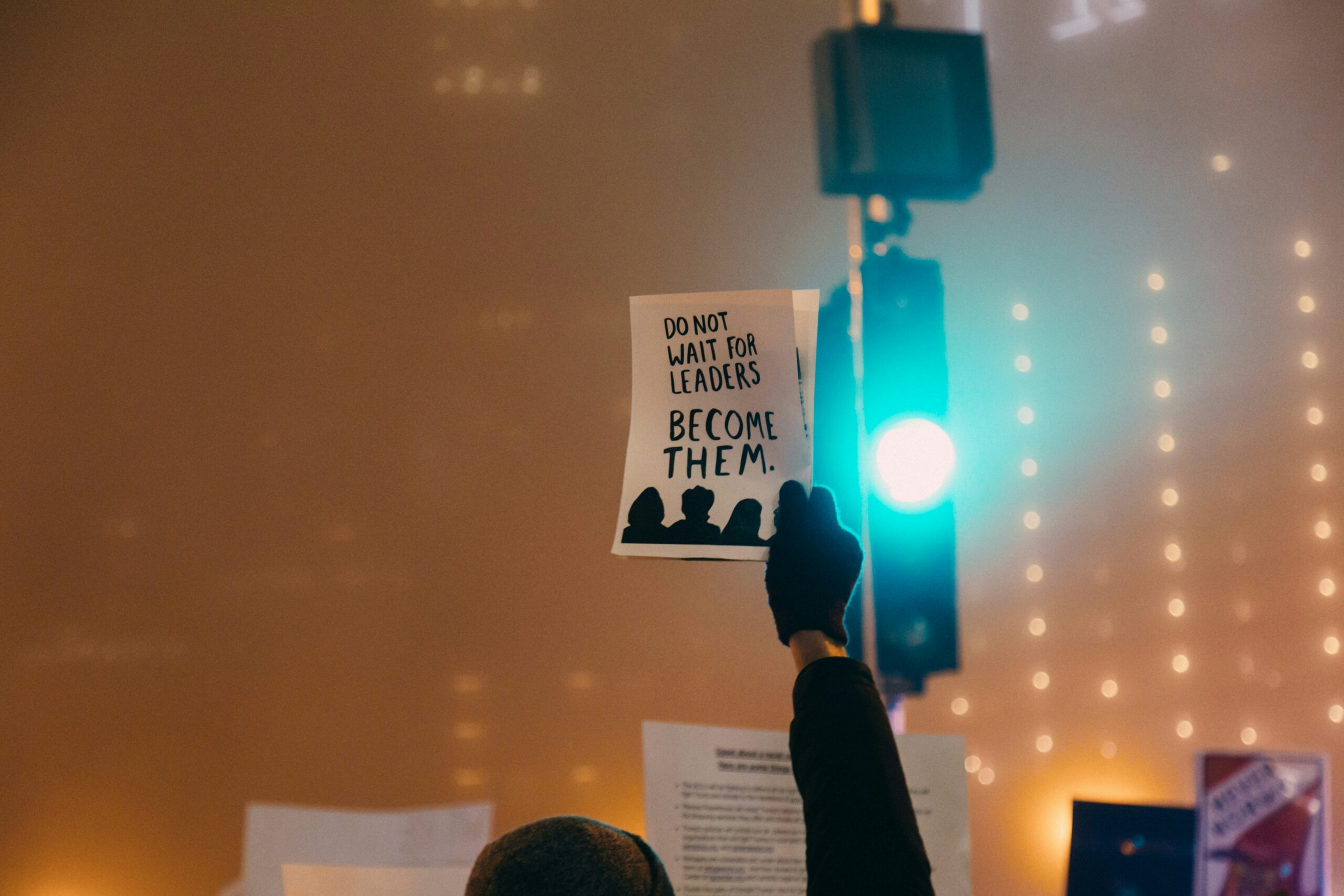

If your organization aims to create sustainable value for society, as a board member, it’s your role to build and safeguard trust in AI.

In brief

- Trusted AI has huge potential to create long-term value for all stakeholders. But the cost of unethical application could be very high.

- AI regulation should protect organizations as well as consumers. As a board member, you need to make sure your organization’s voice is heard.

- Your role is to understand AI technologies vis-à-vis the organization’s strategy so you can mitigate their risks and strengthen the governance around ethical use.

The chances are your organization has been using artificial intelligence, or is actively considering its use, for a myriad of tasks: anything from answering a customer’s query on an app to streamlining and speeding up back-office processes. Yet senior leaders, including board members like you, often have only a limited understanding of how AI works. More pointedly, they don’t regularly discuss its use and application within the organization to ensure that ethical standards are adhered to.

Admittedly, as a board member, keeping up with the constant evolution of AI is hard and the learning curve is steep. At the EY Center for Board Matters, we wanted to help by exploring the link between deploying trusted AI and delivering long-term value. So, we interviewed a number of experts to learn more and kick off the first film in our Board Imperative Series.

The film provides critical answers and actions to help board members reframe the future of their organization. In parallel, in this article, we examine your role in building and safeguarding trust in AI. We then consider how you can minimize your organization’s exposure to AI risks and, ultimately, help it do well by doing good.

We realize these are complex tasks — so if you only take away three things after reading this piece, we’d encourage you to:

- Get involved in the AI and broader technology discussion sooner rather than later and help ensure your organization thinks about and embeds AI ethics into its overall AI strategy.

- Learn enough about these technologies to be able to contribute to the discussion by asking your management team the right strategic questions to optimize the technology and minimize your risks.

- Make sure your organization’s voice is heard and feeds into discussions and consultations around any potential associated regulation.

Chapter 1: AI Has Huge Potential to Help Create Sustained Value for All

Get AI right and it can build stakeholder trust. Get it wrong and the risks are existential.

So, what exactly is the link between AI and long-term value?

A responsible organization thinks and acts in the long-term interests of all its stakeholders — from employees and suppliers to regulators and local communities.

That means board members like you must engage with those stakeholders and consider their interests when making decisions. In doing so, you support your organization in creating sustained value for its stakeholders and earning their ongoing trust. That, in turn, makes it more attractive to consumers, investors and prospective employees.

AI has tremendous potential to help create this sustained long-term value — as long as you get it right. It can help you build trust by providing fair outcomes for everyone coming into contact with it, or for whom it makes decisions. It can also contribute to other important elements of a responsible business, such as inclusivity, sustainability, and transparency.

But rapid digitalization, particularly the rise of customer analytics, has raised questions around discrimination and fairness that risk destroying that trust. In a world where inequalities are more evident than ever, an AI technology that proves biased against particular groups, or prevents certain outcomes, is very bad news. As such, the risks of getting AI wrong aren’t only serious; they can threaten the future of an organization.

As the lifeblood of AI, data is key to building and keeping trust

Ethical AI isn’t just about preventing bias, though. It’s also about privacy. “The lifeblood of AI as it’s currently developed is data,” says Reid Blackman Ph.D., Founder and CEO of Virtue Consultants. “Companies have to balance the need to collect and use that data with ensuring they have consumer trust – so consumers continue to feel comfortable sharing it.”

To do this, boards need to make sure their organizations are clear and transparent about their approach to data, so consumers can give informed consent.

This will create a virtuous circle: the more consumers trust you, the more data they’ll share with you. And the more data they share, the better your AI — and ultimately, your business outcomes.

On the flipside, organizations that don’t treat their data with care can create a vicious cycle. It can take only one mistake, or perceived mistake, for a user to stop trusting your organization. If this happens, they may share less data, making your AI less effective — or even desert you for good.

Boards have a vital role to play in protecting their organizations from this vicious cycle. As Reid says, “Boards of directors have a responsibility to ensure that the reputation of their brand is protected.”

“Companies have to balance the need to collect and use data with ensuring they have consumer trust – so consumers continue to feel comfortable sharing it.”

Reid Blackman Ph.D., Founder and CEO of Virtue Consultants

Boards need to be more constructive and contributory as AI strategies evolve

From day one of developing your AI strategy, it’s crucial you help management understand the risks and opportunities these technologies bring — and how ethics influence them both. That way, you can help them build the trust they need to embrace AI fully in the organization.

But to do that, you need to understand and trust AI yourself. And, as of today, the trust gap could be partly what’s holding organizations back.

According to John Thompson, Chairman of the Board at Microsoft, a lack of knowledge on the board is one reason for this. “There aren’t enough people that know the technology and understand its applicability, and therefore how it can be used in a meaningful way for the organization overall,” he says. “So making sure that the board is knowledgeable about the platform, and has a point of view, is a critical issue.”

It’s worth bridging this trust gap in your organization to help prevent negative news stories from damaging your brand. It could also allow you to support your organization in deploying AI technologies that are human-centric, trustworthy, and serve society as a whole.

3 Ways to Make Sure AI Helps Create Long-Term Value

- Work with management to establish the objectives and ethical principles of AI use in your organization. That should include how you’ll collect, store and use data, as well as how you’ll communicate your design principles to both internal and external stakeholders. Then make sure those principles are built into your AI strategy from the start, not bolted on after.

- Educate yourself by getting involved early. Depending on the sector you’re in, you’ll need to understand more or less about these technologies to manage associated risks and contribute to discussions about their use and application. In some instances, you may also need to play an active role in overseeing how the AI strategy is developed and carried out. If not, at a minimum, you’ll need to hold the operations team to account, and ask them the right questions to make sure they align with the ethical principles outlined in that strategy.

- Make sure management conducts scenario planning to assess the associated risks before rolling out a new AI technology. This could include engaging a panel of diverse stakeholders to assess and consider whether they are treated equally and impacted accordingly. Your organization should then build on the findings of these panels to ensure that any inequities or biases uncovered are addressed prior to launch.

Chapter 2: Regulators Need to Protect Organizations As Well As Consumers

Global standards would give consumers and organizations the same protection around the world.

The right regulation will help boards by setting parameters that make sure organizations deploy AI in a trusted way. That means a way that’s safe and fair for the consumer, as well as good for business. As Eva Kaili, a member of the European Parliament and Chair of its Science and Technology Options Assessment body (STOA), puts it: “We want regulation to benefit citizens, not just maximize profits.”

But two factors make creating appropriate regulation a challenge. First, regulators need to walk a fine line between protecting consumers and giving organizations enough room to innovate; too strict, and innovation is stifled; too lax, and consumers are vulnerable to bias or privacy breaches.

Second, AI technologies cross borders. Recognizing this, in October 2020, the European Parliament became one of the first institutions to publish detailed proposals on how to regulate AI across its member states. It’s now rewriting its draft legislation in the light of the COVID-19 pandemic.

Collaboration and dialogue will be key to creating global standards

We applaud this effort. But as Eva says: “We cannot ignore that we have to apply global standards to get the maximum benefit of AI technology.”

For Eva, these standards would need to address business-to-consumer as well as business-to-business interactions, so a one-size-fits-all approach wouldn’t work. Instead, she suggests, “Different business models will have to show how they respect privacy, how they respect fundamental rights and principles and how they manage to do that by default and embed it in their algorithms. They will need to follow principles that ensure that businesses or consumers that interact at an international level will have the same protection that they have in their own country.”

The principles would also flex to reflect varying levels of risk, as Eva explains. “The concept at this point is to ensure that we will have different approaches per sector — low risk and high risk.”

“We cannot ignore that we have to apply global standards to get the maximum benefit of AI technology.”

Eva Kaili, Member of the European Parliament and Chair of its Science and Technology Options Assessment body (STOA)

Collaboration between the private sector, governments and academia is central to making sure legislation reflects how companies are using AI and balances risk with commercial reality. “We have to keep an open dialogue,” says Eva. “It’s very important, since our legislative proposals are relevant to what the market needs, to make sure we will be open to listen.”

3 Ways to Encourage and Support the Right AI Regulations

- Ask probing questions of management to better understand how regulation and good practice in data and algorithmic governance can benefit your organization, stakeholders and wider society, especially if you go beyond what the regulators require.

- Make sure your organization is a part of discussions and public consultations around potential regulation as early as possible and then on an ongoing basis. Advocate for that collaboration where it doesn’t already exist.

- Ensure the relevant governance structures to support any regulation are embedded and that mechanisms are in place to keep track of any updates or amendments.

Chapter 3: 10 Questions You Can Ask to Help Build and Safeguard Trust in AI

To mitigate AI risks, you’ll need to build your knowledge and strengthen governance and oversight around its use and application.

It’s clear that governing the use of AI can be complex and challenging, particularly for boards outside of the tech sector. But as John Thompson says: “I think it’s unequivocal that AI will, in fact, be an important technology platform for every company around the world.”

That means you’ll need to make sure the AI technologies your organization designs and deploys are unbiased and safeguard the organization against exposure to associated risks. To do that, you’ll need to educate yourself to understand these technologies better, and strengthen the governance around their use.

“I think it’s unequivocal that AI will, in fact, be an important technology platform for every company around the world.”

John Thompson, Chairman of the Board at Microsoft

These questions should help you kickstart or re-evaluate the current process in your organization, so that trusted AI is established to create long-term value for all.

- How can we ensure our early involvement and continued commitment to our organization’s AI strategy?

- Do we understand our specific role in establishing objectives and principles for AI that help protect our organization against unintended consequences? Do we have adequate processes and procedures in place to react quickly in case of AI failures?

- Do we have the right set of skills to guide management in making the right decisions about AI, trust, ethics and risks? Can we bridge any gaps with internal training or do we need to consider external resources? What’s our plan for continuous upskilling and training?

- Have we made transparency and accountability a top priority when it comes to AI? If yes, where can our principles be found? If not, what’s our plan to address this?

- Are we confident the current governance structure is sufficient to effectively oversee our organization’s use of AI, whether developed in house or acquired? Should we consider a special committee to provide enhanced governance and focus?

- Are we consulting a diverse group of stakeholders, including end users, to challenge and test the objectives we’ve set for our AI applications? Are we regularly communicating with the operating team to make sure these objectives align with how they’re developing and deploying AI?

- How do we ensure that our AI operations teams factor in compliance and risk management from the earliest stages of development?

- To what extent do we work with governments to understand AI regulatory developments in our sector, and make sure we’re aligned? Should we consider heightened engagement if our current levels are low or non-existent?

- How do we identify and learn from early adopters and regulation pioneers to aid our decision-making on how best to use and govern these technologies within our organization?

- Are we doing enough with AI technologies to move from remaining competitive to also ensuring that we create long-term value? If not, which of the issues identified here are standing in our way?

The views of third parties set out in this publication are not necessarily the views of the global EY organization or its member firms. Moreover, they should be seen in the context of the time they were made.

Acknowledgment

EY would like to personally thank Reid Blackman, Eva Kaili and John Thompson for the time and insights they shared in the film.

The article was first published here.

Photo by Markus Winkler on Unsplash.
